I actively research real-world authorization flaws and privacy boundary weaknesses in large-scale platforms. While testing Instagram’s group chat permission model, I discovered an interesting edge case involving block relationships and group membership logic.

The Privacy Expectation

Instagram clearly communicates in its settings:

“You can be added to group chats by everyone, except by people you’ve blocked.”

From a user’s perspective, this is straightforward.

Blocking someone should terminate all interaction channels — including being added to group chats.

The interface reflects this correctly.

But I wanted to understand whether the backend enforced the rule just as strictly.

High-Level Technical Walkthrough

To evaluate this, I conducted a controlled test between two accounts:

- User A — The account enforcing the block

- User B — The account later blocked

1️⃣ Initial Interaction

User B created a group chat and added User A.

While User B performed this legitimate action, I captured the group-add request with an intercepting proxy to see how the backend processes membership operations.

At this stage, everything behaved normally.

2️⃣ Relationship Change

Next:

- User A blocked User B

- User A exited the group

From that point on, the UI prevented User B from re-adding User A.

As expected.

From a privacy standpoint, the boundary appeared enforced.

3️⃣ Replaying a Previously Valid Action Under a Race Condition

Instead of going through the interface, I replayed the previously captured membership request while triggering a race condition.

No UI interaction.

No visible permission flow.

Just re-triggering the earlier group-add operation.

Result

User A was added back into the group conversation — despite the active block relationship.

This demonstrated that while the UI respected the block rule, the backend did not appear to re-evaluate the relationship state during execution of that membership action.

Security Insight

This behavior suggests a gap in dynamic authorization validation.

In systems where permissions depend on real-time user relationships (like blocking), access decisions must be checked at execution time — not only when an action was first initiated.

If relationship state changes (like a block), previously valid actions should no longer succeed.
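To make the difference concrete, here is a minimal Python sketch of the two patterns. It is a hypothetical in-memory model, not Instagram's backend: `RelationshipStore`, `AddRequest`, `create_request`, `execute_stale` and `execute_fresh` are illustrative names, and the assumption that a captured request effectively carries the authorization decision made when it was first issued is mine, not something the observed traffic confirms. `execute_stale` trusts that earlier decision; `execute_fresh` re-reads the block state at execution time.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class RelationshipStore:
    """Hypothetical in-memory store of block relationships as (blocker, blocked) pairs."""
    blocks: set[tuple[str, str]] = field(default_factory=set)

    def block(self, blocker: str, blocked: str) -> None:
        self.blocks.add((blocker, blocked))

    def is_blocked_either_way(self, a: str, b: str) -> bool:
        # A block in either direction should prevent the interaction.
        return (a, b) in self.blocks or (b, a) in self.blocks


@dataclass(frozen=True)
class AddRequest:
    """A captured group-add request (hypothetical shape).

    `authorized` records the decision made when the request was first created.
    """
    actor: str
    target: str
    group_id: str
    authorized: bool


def create_request(store: RelationshipStore, actor: str, target: str, group_id: str) -> AddRequest:
    # Authorization is evaluated once, at initiation time.
    return AddRequest(actor, target, group_id,
                      authorized=not store.is_blocked_either_way(actor, target))


def execute_stale(store: RelationshipStore, members: dict[str, set[str]], req: AddRequest) -> bool:
    # Flawed pattern: trusts the decision recorded when the request was created.
    if not req.authorized:
        return False
    members.setdefault(req.group_id, set()).add(req.target)
    return True


def execute_fresh(store: RelationshipStore, members: dict[str, set[str]], req: AddRequest) -> bool:
    # Safer pattern: re-evaluates the current relationship state at execution time.
    if store.is_blocked_either_way(req.actor, req.target):
        return False
    members.setdefault(req.group_id, set()).add(req.target)
    return True


if __name__ == "__main__":
    store = RelationshipStore()
    members: dict[str, set[str]] = {}
    req = create_request(store, "user_b", "user_a", "group_1")  # valid when captured
    store.block("user_a", "user_b")                              # relationship changes
    print(execute_stale(store, members, req))  # True: the replay succeeds despite the block
    print(execute_fresh(store, members, req))  # False: the block is honored
```

With the stale check, the replayed request still succeeds after the block; with the execution-time check, the same request is rejected.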

Why This Matters

Blocking is often used in situations involving:

- Harassment prevention

- Personal boundaries

- Safety enforcement

- Abuse mitigation

If a blocked user can indirectly reintroduce contact via group mechanics, it weakens the trust model of the platform.

While exploitation requires specific conditions, such as a previously captured request and a successful race, the privacy expectation remains clear:

A blocked user should not be able to re-establish interaction channels.

Suggested Mitigation

To strengthen enforcement:

- Perform real-time block relationship validation before processing group membership updates

- Reject group-add actions when a block relationship exists in either direction

- Ensure membership mutations act on the current relationship state, not on the state captured when the request was first issued
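The three mitigations above can be combined into one sketch. The Python below is a hypothetical, single-process model (`GroupMembershipService` and its methods are illustrative names, not a Meta API). It checks for a block in either direction and performs the membership insert inside the same critical section, so a block committed before the add runs is always observed, and a burst of concurrent replays cannot slip through a gap between check and write. A production backend would more likely express this atomicity as a database transaction or conditional write than as a process-local `threading.Lock`; the lock is only a stand-in.

```python
from __future__ import annotations

import threading


class GroupMembershipService:
    """Hypothetical sketch: block checks and membership writes share one lock."""

    def __init__(self) -> None:
        self._lock = threading.Lock()
        self._blocks: set[tuple[str, str]] = set()   # (blocker, blocked)
        self._members: dict[str, set[str]] = {}      # group_id -> member ids

    def block(self, blocker: str, blocked: str) -> None:
        with self._lock:
            self._blocks.add((blocker, blocked))

    def _blocked_either_way(self, a: str, b: str) -> bool:
        # Caller must hold self._lock.
        return (a, b) in self._blocks or (b, a) in self._blocks

    def add_member(self, actor: str, target: str, group_id: str) -> bool:
        """Add `target` to `group_id` on behalf of `actor`.

        The block check and the insert run in one critical section, so the
        decision always reflects the current state (no check-then-act gap).
        """
        with self._lock:
            if self._blocked_either_way(actor, target):
                return False
            self._members.setdefault(group_id, set()).add(target)
            return True

    def leave(self, member: str, group_id: str) -> None:
        with self._lock:
            self._members.get(group_id, set()).discard(member)

    def members(self, group_id: str) -> set[str]:
        with self._lock:
            return set(self._members.get(group_id, set()))


if __name__ == "__main__":
    svc = GroupMembershipService()
    print(svc.add_member("user_b", "user_a", "group_1"))  # True: no block yet
    svc.block("user_a", "user_b")
    svc.leave("user_a", "group_1")

    # Fire many concurrent replays of the same add; every one takes the locked path.
    results: list[bool] = []

    def replay() -> None:
        results.append(svc.add_member("user_b", "user_a", "group_1"))

    threads = [threading.Thread(target=replay) for _ in range(20)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    print(any(results))  # False: no replay re-adds the blocking user
```

Because every add re-reads the block set under the lock, a replayed request gains nothing from having been valid earlier.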

Severity Justification

- No account takeover

- No data exposure

- No privilege escalation beyond intended functionality

However:

- Privacy guarantees are weakened

- Blocking trust boundary is impacted

- Harassment vectors could be amplified

Final Thoughts

Large-scale platforms like Instagram rely heavily on relationship-based authorization logic.

Even subtle inconsistencies between UI enforcement and backend validation can create unexpected privacy gaps.

Responsible disclosure helps strengthen those boundaries — and reinforce user trust at scale.

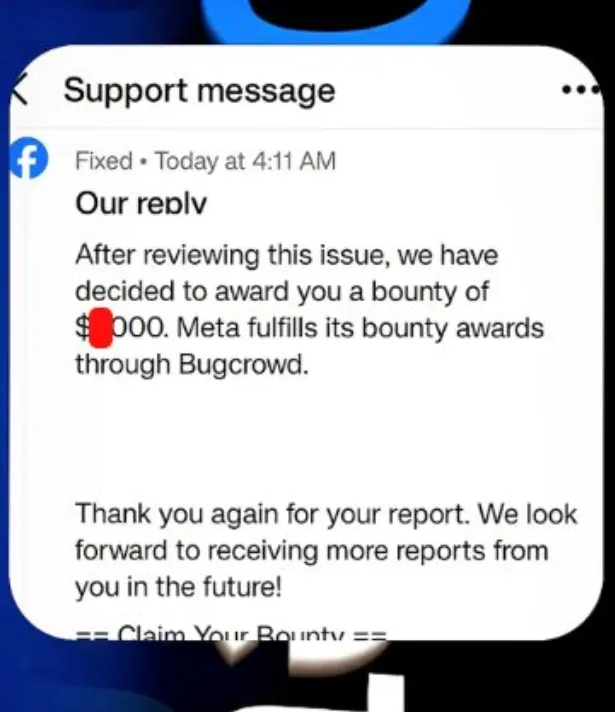

Meta Hall of Fame 2025

Recognised security researchers are listed here: https://bugbounty.meta.com/leaderboard/?league=&year=2025

🔚 Conclusion

This research highlights how even well-defined privacy controls on platforms like Instagram must be consistently enforced at every layer — especially at the backend.

While the interface correctly reflected the expected behavior, deeper testing showed that dynamic relationship-based permissions require strict real-time validation.

Strengthening these controls helps reinforce user trust and ensures that blocking truly functions as a definitive boundary, exactly as users expect it to on platforms owned by Meta Platforms, Inc.